Jest Snapshots for API Contract Testing

Jest is a great JavaScript testing tool: it's simple, fast and easy to use. One of the key features that I enjoy using for UI testing is its [snapshot testing](https://jestjs.io/docs/en/snapshot-testing), a quick way of capturing the output of a test and comparing it against an expected result. Opinion is mixed on snapshot testing and where it fits into the testing pyramid, but it's a useful tool in the utility belt. One trick to bear in mind is that snapshot testing isn't just useful for testing UI output; you can use it for a variety of purposes. In this post I will demonstrate how it can be used for testing your API contracts.

How Snapshots Work

In a Jest test, use the expect(...).toMatchSnapshot() method - that's it! The first time you run a test with a snapshot assertion, a new file is written to a __snapshots__ folder next to the test file. This file represents the output of your test and should be committed to source control. On subsequent runs, the output of the test is diffed against the version stored in that file. The test passes if the outputs are equal. If they are not, the test fails and produces a diff of the expected output against the actual output. If you would like the change to be accepted, say because you have redesigned a UI component, you can run the tests with the -u or --updateSnapshot flag. This overwrites the snapshot file with the new output, which is again committed to source control.
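To make the record-then-compare cycle concrete, here is a minimal sketch of the idea in plain Node. It is not Jest's implementation: the matchSnapshot helper, its update option and the JSON file format are all made up for illustration, and Jest uses its own serializer and .snap format.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

// Hypothetical helper illustrating the snapshot cycle (not Jest's real code).
function matchSnapshot(snapshotFile, value, { update = false } = {}) {
  const actual = JSON.stringify(value, null, 2);

  // First run, or an explicit update: record the output and pass.
  if (update || !fs.existsSync(snapshotFile)) {
    fs.writeFileSync(snapshotFile, actual);
    return { pass: true, written: true };
  }

  // Later runs: compare the new output against the recorded one.
  const expected = fs.readFileSync(snapshotFile, 'utf8');
  return { pass: actual === expected, written: false, expected, actual };
}

// Use a throwaway directory so the demo leaves the project untouched.
const dir = fs.mkdtempSync(path.join(os.tmpdir(), 'snapshot-demo-'));
const file = path.join(dir, 'user.snap.json');

// 1. No snapshot exists yet, so it is recorded and the check passes.
const firstRun = matchSnapshot(file, { id: 1, name: 'Sam' });

// 2. Same output as recorded: passes without writing anything.
const sameOutput = matchSnapshot(file, { id: 1, name: 'Sam' });

// 3. The output changed: fails, with both versions available for a diff.
const changedOutput = matchSnapshot(file, { id: 1, fullName: 'Sam' });

// 4. The change was intentional: overwrite the snapshot, like the -u flag.
const afterUpdate = matchSnapshot(file, { id: 1, fullName: 'Sam' }, { update: true });

console.log({
  firstRun: firstRun.pass,
  sameOutput: sameOutput.pass,
  changedOutput: changedOutput.pass,
  afterUpdate: afterUpdate.pass,
});
```

The important design point is that the recorded file is the source of truth: nothing is asserted by hand, so the review of the snapshot diff in source control is where the real checking happens.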

API Contract Testing

When building an API it's best to avoid introducing breaking changes wherever possible, such as altering the response that an endpoint produces. As a developer I would like fast feedback if a change I make alters the result of any existing endpoint, so that I can quickly and safely iterate on changes. Jest snapshot testing is an excellent fit for this use case, as the data under test is inherently serializable!

Let's demonstrate this in action! First off, I will define an incredibly simple Node server. It uses express to serve up some static data from two API endpoints: /users to get all users, and /user/:id to get a user by its id. Responses will be served up as JSON.

To set up the project:

npm init --yes

npm install --save express jest supertest

Then define server.js

const express = require('express');

// Static data, keyed by user id
const users = {
  1: {
    id: 1,
    name: "Sam",
    age: 29
  },
  2: {
    id: 2,
    name: "Taylor",
    age: 10
  },
  3: {
    id: 3,
    name: "Cleo",
    age: 11
  }
};

const app = express();

// Get all users
app.get('/users', (req, res) => {
  res.json(users);
});

// Get a single user by id, or respond with 404 if there is no such user
app.get('/user/:id', (req, res) => {
  const id = req.params.id;
  const user = users[id];
  if (user) {
    res.json(user);
  }
  else {
    res.sendStatus(404);
  }
});

// Export the app without listening, so that tests can drive it directly
module.exports = app;

And index.js:

const app = require('./server');

app.listen(8080, () => {
  console.log('server running');
});

Add the following scripts to the package.json to allow starting the express server and running the tests:

"scripts": {
  "start": "node .",
  "test": "jest"
}

(Are you liking the simplicity of this as much as I am?!)

We'll start by writing a test against the /users endpoint in a new file: __tests__/server.spec.js. I'll be using the supertest library to assist with testing - it allows me to fire off requests against the express server, wait for the response and then make assertions. This ties in nicely with Jest, which natively supports asynchronous tests; if you're running a recent version of Node, you can use async/await syntax out of the box too.

The first test looks as follows:

const request = require('supertest');
const app = require('../server');

describe('the server API', () => {
  it('returns all users', async () => {
    const response = await request(app).get('/users');
    expect(response.body).toMatchSnapshot();
  });
});

Run this and you'll notice a new file has been saved at __tests__/__snapshots__/server.spec.js.snap. This is the snapshot output:

// Jest Snapshot v1, https://goo.gl/fbAQLP

exports[`the server API returns all users 1`] = `
Object {
  "1": Object {
    "age": 29,
    "id": 1,
    "name": "Sam",
  },
  "2": Object {
    "age": 10,
    "id": 2,
    "name": "Taylor",
  },
  "3": Object {
    "age": 11,
    "id": 3,
    "name": "Cleo",
  },
}
`;

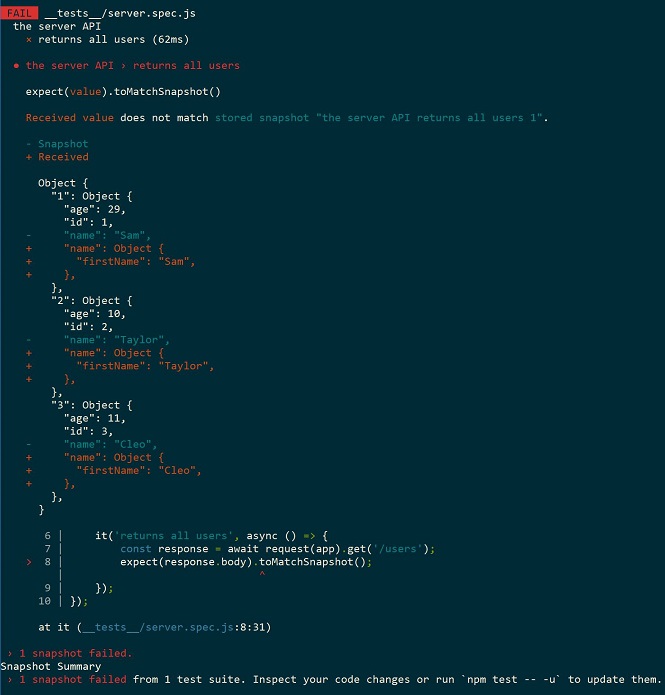

Remarkably, it's incredibly easy to read as it's effectively stringified JSON. Now if I were to change the output, such as changing the structure of the user object, and re-run the tests, I'll get a test failure which looks as follows:

As you can see, the difference is clearly communicated. Through these tests we have defined, and are enforcing, the 'contract' that the API should adhere to. Of course, additive changes are typically considered 'fine', in that they won't 'break' existing consumers' use of an endpoint. Even so, the snapshot test will still flag an additive change, because the output has changed. In these cases a simple re-run with the --updateSnapshot flag records that the change was intentional.
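If it helps when reviewing a snapshot diff, the distinction between an additive and a breaking change can be sketched in code. The isAdditiveChange helper below is hypothetical and not part of Jest; it assumes a change is 'additive' when every field of the previous response is still present with the same type.

```javascript
// Hypothetical helper, not part of Jest: returns true when `current` keeps
// every field of `previous` with the same type, so any difference is additive.
function isAdditiveChange(previous, current) {
  const isObject = (value) => value !== null && typeof value === 'object';

  if (!isObject(previous)) {
    // Leaf value: the contract holds as long as the type is unchanged.
    return (
      typeof previous === typeof current &&
      (previous === null) === (current === null)
    );
  }
  if (!isObject(current) || Array.isArray(previous) !== Array.isArray(current)) {
    return false;
  }
  // Every existing key must still be present and compatible; extra keys are
  // allowed. Arrays are compared index by index, which is a simplification.
  return Object.keys(previous).every(
    (key) => key in current && isAdditiveChange(previous[key], current[key])
  );
}

const before = { id: 1, name: 'Sam', age: 29 };

// A new field was added: existing consumers keep working.
const withEmail = { id: 1, name: 'Sam', age: 29, email: 'sam@example.com' };

// A field was renamed: consumers reading `name` would break.
const renamed = { id: 1, fullName: 'Sam', age: 29 };

// A field changed type: consumers expecting a number would break.
const retyped = { id: 1, name: 'Sam', age: '29' };

console.log(isAdditiveChange(before, withEmail));
console.log(isAdditiveChange(before, renamed));
console.log(isAdditiveChange(before, retyped));
```

A check like this is only a rough guide. The snapshot diff itself remains the record of what changed, and a human still decides whether to accept it.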

Your own API boundary might not be the only API contract that you wish to test. Perhaps an API call made to your server triggers a request to an upstream system? This is common, for example, if your API acts as a gateway to a microservice-based backend, places requests on a message queue, or speaks to mainframe systems. It may be important in your use case to capture those requests too! In these situations you can use snapshot testing at the integration test layer, treating your server as a black box: input some data and observe the outputs.
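One way to capture upstream requests is to swap the real upstream client for one that records what it is asked to send. The sketch below is only an illustration: createRecordingClient, getUserViaUpstream and the /internal/users path are all hypothetical names, not something from the server defined earlier.

```javascript
// Hypothetical upstream client that records every request instead of sending
// it, and replies with a canned response.
function createRecordingClient(cannedResponse) {
  const calls = [];
  return {
    calls,
    async send(request) {
      calls.push(request);
      return cannedResponse;
    },
  };
}

// Hypothetical gateway logic: fetches a user from an upstream service and
// returns a trimmed-down version to the caller.
async function getUserViaUpstream(client, id) {
  const upstream = await client.send({
    method: 'GET',
    path: `/internal/users/${id}`,
    headers: { accept: 'application/json' },
  });
  return { id: upstream.id, name: upstream.name };
}

// Drive the gateway as a black box and collect both kinds of output:
// the response to our caller and the requests made upstream.
async function demo() {
  const client = createRecordingClient({ id: 1, name: 'Sam', age: 29 });
  const result = await getUserViaUpstream(client, 1);
  return { result, upstreamRequests: client.calls };
}

demo().then((output) => console.log(JSON.stringify(output, null, 2)));
```

In a Jest test, the recorded upstreamRequests array could be passed to expect(...).toMatchSnapshot() in the same way as response.body, so that a change to the outgoing request shows up as a snapshot diff.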

Snapshot testing is almost certainly not a panacea - but for this particular use case, where you're looking to record and diff serializable output for the purpose of regression testing, it is an ideal match. I would highly recommend considering it for use cases other than UI testing!